How I Accidentally Became #2 VDP* Researcher for Germany on HackerOne

A candid story about building iteratively with Claude Code and ending up on the HackerOne VDP leaderboard for Germany.

* VDP = Vulnerability Disclosure Program

What we will cover in the next 25 minutes

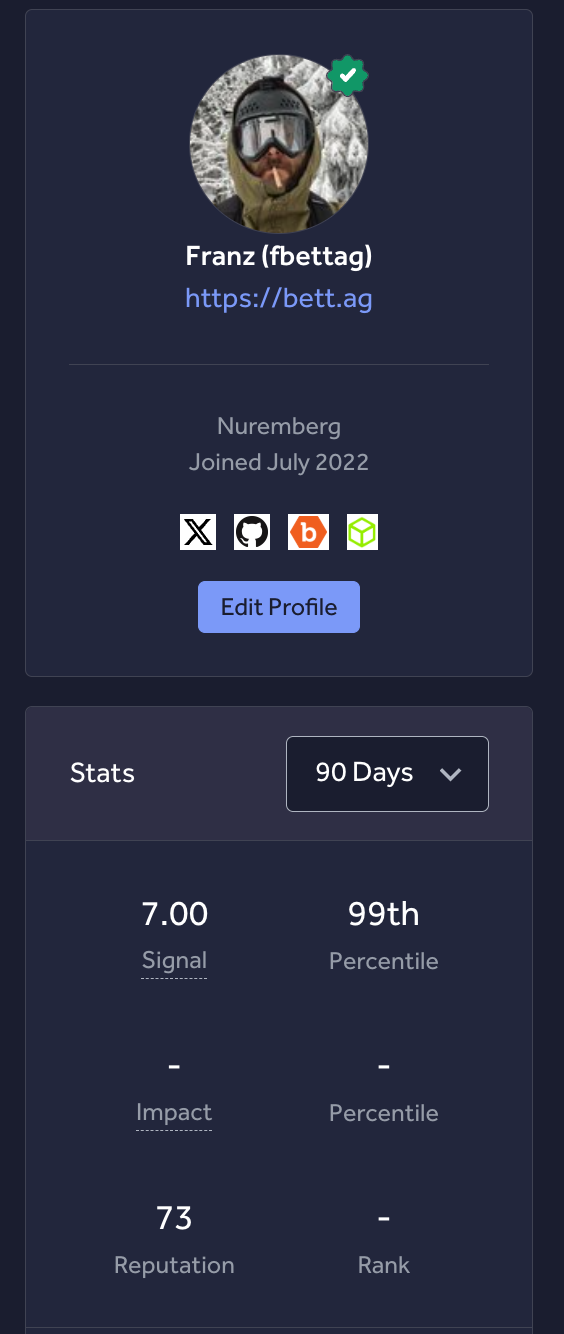

Franz Bettag

Polyglot engineer since 2001

25 years of consulting for enterprise to Fortune 500. On-prem, cloud, AI, security, and development — paid projects in 30+ languages before AI came around.

Sole IT Engineer next to the CEO. Re-developed the platform from 2014–2019 serving 30M+ monthly users, then exited to eBay.

Tasked to fix a critical performance bottleneck. Found a $2M+ accounting error while rewriting the system. Cut monthly job runtime from 2+ hours to 5 minutes.

Rebuilt flash-sale push notification infra for the largest fashion retailer in the US. 10M+ concurrent pushes, delivery cut from 2 days to under 10 minutes.

If you recognize my last name, it's probably because my grandfather invented the BobbyCar and was the founder of BIG Spielwaren.

Build me a billion dollar business.

Make no mistakes!

Find me a $100k bug bounty!

No false positives!

The ranking followed real shipped findings

One canonical backend. Distributed execution.

Central server

State, orchestration, findings, submissions, operator UI.

Agents = identity + method

Full session prompts: exploit-web-attacking, recon-subdomain-discovery, audit-finding-verification, …

Skills = composable building blocks

Reusable techniques: subdomain-takeover-detection, nuclei-scanning, playwright, sqlmap, …

4 pwn hosts + shared CLI

A shared bounty-cli standardizes every host run.

NixOS + flakes

Deterministic infra, reproducible deploys, auditable config.

Validation-first state machine

Every risky phase emits an artifact that must pass a gate.
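A minimal sketch of what a validation-first state machine could look like. The phase names, the `Artifact` shape, and the `Campaign` class are hypothetical illustrations, not the actual backend; the only idea taken from the slide is that a phase cannot advance until its artifact passes a gate.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Phase(Enum):
    # Hypothetical phase names, chosen for illustration only.
    RECON = "recon"
    EXPLOIT = "exploit"
    VERIFY = "verify"
    SUBMIT = "submit"


@dataclass
class Artifact:
    phase: Phase
    payload: dict


# A gate inspects an artifact and returns True only if it may advance.
Gate = Callable[[Artifact], bool]


@dataclass
class Campaign:
    gates: dict[Phase, Gate]
    order: list[Phase] = field(default_factory=lambda: list(Phase))
    position: int = 0
    finished: bool = False

    @property
    def phase(self) -> Phase:
        return self.order[self.position]

    def advance(self, artifact: Artifact) -> bool:
        """Advance only if the artifact belongs to the current phase and passes its gate."""
        if artifact.phase is not self.phase:
            raise ValueError(f"expected {self.phase}, got {artifact.phase}")
        if not self.gates[self.phase](artifact):
            return False  # rejected: the campaign stays in the current phase
        if self.position == len(self.order) - 1:
            self.finished = True
        else:
            self.position += 1
        return True
```

With a gate such as `lambda a: bool(a.payload.get("evidence"))`, an artifact without evidence leaves the campaign in `RECON`, and one with evidence moves it to `EXPLOIT`.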

The shape changed as the constraints became obvious

Start with the smallest useful slice

Central server first. One source of truth.

MCP was useful, then expensive

Good for early iteration. Bad for token and context efficiency. Replaced with a shared Go CLI.

Push execution to workers

Parallel campaigns across architectures: Linux for web & Android, macOS for BinDiff fuzzing, iOS firmware diffing & Simulator app testing.

Separate agents from skills

Agents own the session identity. Skills are composable tools agents invoke. Clear contract between the two.
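One way to picture the contract between agents and skills, as a rough sketch. The registry, the `Agent` dataclass, and the per-agent allowlist are assumptions made for illustration; the skill bodies are stubs and do not run any real tooling. Only the agent and skill names come from the slides.

```python
from dataclasses import dataclass
from typing import Callable

# A skill takes a target and returns a structured result.
Skill = Callable[[str], dict]
SKILLS: dict[str, Skill] = {}


def skill(name: str):
    """Register a function as a reusable skill under a stable name."""
    def register(fn: Skill) -> Skill:
        SKILLS[name] = fn
        return fn
    return register


@skill("subdomain-takeover-detection")
def takeover_detection(target: str) -> dict:
    # Stub: a real skill would inspect DNS records here.
    return {"skill": "subdomain-takeover-detection", "target": target, "findings": []}


@skill("nuclei-scanning")
def nuclei_scanning(target: str) -> dict:
    # Stub: a real skill would shell out to a scanner here.
    return {"skill": "nuclei-scanning", "target": target, "findings": []}


@dataclass(frozen=True)
class Agent:
    name: str                        # session identity
    prompt: str                      # full session prompt (method)
    allowed_skills: tuple[str, ...]  # hypothetical allowlist

    def invoke(self, skill_name: str, target: str) -> dict:
        """Run a skill on behalf of this agent, if the agent may use it."""
        if skill_name not in self.allowed_skills:
            raise PermissionError(f"{self.name} may not use {skill_name}")
        return SKILLS[skill_name](target)
```

The design point is that a skill knows nothing about which agent calls it, so the same skill can be reused across sessions with different identities.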

MCP burns tokens. A CLI + skills doesn't.

Model → tool schema → inference → tool call → result → inference → …

High token cost per step

Prompt → agent runs locally → skills execute in-process → results to server

Tokens only for reasoning

Opera SIP takeover: why recon automation matters

_sip._tcp.opera.com. 86400 IN SRV 0 0 5060 e1.viju.vc.

_sips._tcp.opera.com. 86400 IN SRV 0 0 5061 e1.viju.vc.

e1.viju.vc, a domain that no longer existed.

I registered viju.vc manually, pointed e1.viju.vc at my infrastructure, and confirmed the takeover.
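The recon step behind this finding can be sketched roughly as follows: parse SRV records and flag those whose target no longer resolves. This is an illustration, not the tooling used in the talk. The function names are hypothetical, and a failed lookup is only a hint: it does not prove the parent domain is free to register, so a manual check is still needed.

```python
import socket
from typing import Callable, Iterable, NamedTuple


class SrvRecord(NamedTuple):
    name: str
    ttl: int
    priority: int
    weight: int
    port: int
    target: str


def parse_srv(line: str) -> SrvRecord:
    """Parse one zone-file style SRV line: name ttl IN SRV prio weight port target."""
    name, ttl, klass, rtype, prio, weight, port, target = line.split()
    if klass != "IN" or rtype != "SRV":
        raise ValueError(f"not an SRV record: {line!r}")
    return SrvRecord(name, int(ttl), int(prio), int(weight), int(port), target.rstrip("."))


def resolves(host: str) -> bool:
    """Default liveness check: does the host resolve to any address?"""
    try:
        socket.getaddrinfo(host, None)
        return True
    except socket.gaierror:
        return False


def dangling_targets(
    lines: Iterable[str],
    is_live: Callable[[str], bool] = resolves,
) -> list[SrvRecord]:
    """Return the SRV records whose target fails the liveness check."""
    return [record for record in map(parse_srv, lines) if not is_live(record.target)]
```

Passing `is_live` as a parameter keeps the check testable offline and lets a stricter check (for example a WHOIS or registrar lookup) replace plain resolution.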

Every session is a new hire

Project rules, coding conventions, architecture overview. Loaded automatically every session.

Learnings from past sessions. Mistakes, patterns, decisions the agent shouldn't repeat or forget.

The specific task. Short, precise, assumes the agent already knows the context from the layers above.

Claude Code was useful because I constrained it

Scope limits hallucination. Smaller context = better output.

“You are done when X passes.” Define success criteria upfront.

CLAUDE.md documents project rules. Memory retains learnings across sessions.

Split independent work across agents. Recombine only validated outputs.

This is the portable pattern: phase separation with gates

Discovery

Map the surface and emit concrete candidate artifacts.

Execution

Probe inside a bounded role with explicit success criteria.

Validation

Reject weak artifacts before they contaminate later phases.

Composition

Chain only validated pieces into a higher-value outcome.

Feedback

Update prompts, routing rules, and profiles from misses.
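The five phases can be sketched as one small loop. All names here (`Candidate`, `run_loop`, and the four callables) are hypothetical; the sketch only shows the data flow: weak results are rejected before composition, and rejected results are returned as input for the feedback step.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Candidate:
    target: str
    evidence: str = ""


def run_loop(
    discover: Callable[[], Iterable[Candidate]],
    execute: Callable[[Candidate], Candidate],
    validate: Callable[[Candidate], bool],
    compose: Callable[[list], object],
) -> tuple[Optional[object], list]:
    """Run one pass of discovery, execution, validation, and composition.

    Returns the composed outcome (or None if nothing validated) and the
    list of misses, which is what the feedback phase would learn from.
    """
    validated: list[Candidate] = []
    misses: list[Candidate] = []
    for candidate in discover():        # Discovery: concrete candidates
        result = execute(candidate)     # Execution: bounded probe
        if validate(result):            # Validation: gate before reuse
            validated.append(result)
        else:
            misses.append(result)       # kept for Feedback
    outcome = compose(validated) if validated else None  # Composition
    return outcome, misses
```

In practice each callable would be a separate agent session or skill run; keeping them as plain functions makes the gate between phases explicit.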

Ship a CI/CD pipeline from zero to deployed

Discovery

“Investigate this repo. How is it built? What framework, what output? Create an initial git commit if there isn't one.”

Execution GitLab

“Build a GitLab CI/CD pipeline with Auto DevOps for Kubernetes deployment. Look at similar projects for reference.”

Execution GitHub

“Set up GitHub Actions to build a Docker image and deploy to Kubernetes. Look at similar projects for reference.”

Validation

“Commit, push, and check the pipeline status. If it fails, read the logs and fix it. Repeat until the pipeline is green.”

Composition

Build passes → image pushed → deploy triggered → pods running. Each stage gates the next.

Feedback

curl https://your-domain.com returns 200. If not, iterate. You are done when the site is live.
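The "repeat until the pipeline is green" instruction amounts to a bounded retry loop where each failure's logs inform the next fix. A minimal sketch, assuming two hypothetical callables: `run_pipeline` returns a success flag plus logs, and `fix` applies a change based on those logs. The `max_attempts` cap is an added safeguard, not something the slide specifies.

```python
from typing import Callable


def iterate_until_green(
    run_pipeline: Callable[[], tuple[bool, str]],
    fix: Callable[[str], None],
    max_attempts: int = 5,
) -> tuple[int, list[str]]:
    """Run the pipeline until it passes, feeding each failure's logs to fix().

    Returns the attempt number that succeeded and the logs of every run.
    Raises RuntimeError if the pipeline is still red after max_attempts.
    """
    history: list[str] = []
    for attempt in range(1, max_attempts + 1):
        ok, logs = run_pipeline()
        history.append(logs)
        if ok:
            return attempt, history
        fix(logs)  # the only input to the fix is what the failed run reported
    raise RuntimeError(f"pipeline still failing after {max_attempts} attempts")
```

The explicit success criterion ("green", or an HTTP 200 from the deployed site) is what lets the loop terminate on its own instead of relying on the agent's judgment.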

How to copy this tomorrow

Ship a feature with /code

9 agents. One command.

Discovery

/code <feature> — the Architect agent asks up to 5 clarifying questions, then designs schemas, contexts, LiveViews, and routes.

Execution

Feature Developer implements the feature following 2,280 lines of project patterns: domain-driven design, typed schemas, LiveView sessions, Petal UI components.

Validation

Feature Tester runs mix quality. Feature Translator wraps all user-facing strings in gettext and translates to every supported locale.

Composition

Feature Committer analyzes the diff, determines conventional commit type and scope. No commit without green tests. Each agent gates the next.

Feedback

Higher-order commands (/gdpr-audit, /audit-legal, /public-launch) re-run audits after /code. Findings become new /code runs.

GitLab v15 → v18 on Kubernetes. Overnight. Unattended.

Discovery

“Explore our current setup in read-only mode on this Kubernetes cluster, then do a full backup.”

Execution

“Research the best upgrade path. Do not skip intermediary steps. Verify dependencies and requirements for the new versions.”

Validation

“Start the upgrade procedure. You are done when all services that are currently running and up are successfully upgraded and responding.”

Composition

Each intermediary version (v15 → v16 → v17 → v18) is a validated artifact. PostgreSQL migrated in lockstep. Only proceed when the previous hop is healthy.

Feedback

Failures at any hop feed back into the next attempt. The agent retries with context, not from scratch.
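The hop-by-hop upgrade can be sketched as a chain where each version is a gate for the next. The `apply_hop` and `is_healthy` callables and the retry limit are hypothetical; the sketch shows only the control flow: never skip a hop, proceed only when the previous hop is healthy, and carry failure notes into the retry.

```python
from typing import Callable, Optional


def upgrade(
    path: list[str],
    apply_hop: Callable[[str, str, list], Optional[str]],
    is_healthy: Callable[[str], bool],
    max_retries: int = 2,
) -> tuple[str, list[str]]:
    """Walk the upgrade path one hop at a time.

    apply_hop(current, target, notes) returns None on success or an error
    message. Returns the last healthy version reached and the failure notes.
    """
    current = path[0]
    notes: list[str] = []
    for target in path[1:]:
        for attempt in range(max_retries + 1):
            error = apply_hop(current, target, notes)  # notes give retry context
            if error is None and is_healthy(target):
                current = target
                break
            notes.append(f"{current}->{target} attempt {attempt + 1}: {error or 'unhealthy'}")
        else:
            # Every retry failed: stop here instead of building on a broken hop.
            return current, notes
    return current, notes
```

Called with `["v15", "v16", "v17", "v18"]`, the function either ends on `v18` or stops at the last version that passed its health check.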

Don’t start with one giant prompt. Start with a loop.

Security is the example. The architecture is the reusable asset.